AI is transforming low-code platforms by optimizing resource allocation in real time, improving efficiency in development workflows, and reducing project delays. Here's what you need to know:

- Core Benefits: AI predicts workload demands, assigns tasks, and monitors capacity to ensure efficient use of resources like developer time, budgets, and computing power.

- Key Features: Platforms integrate AI tools for automation, natural language queries, and governance, helping businesses complete projects faster and more cost-effectively.

- Platform Highlights:
  - Appsmith: Simplifies AI integration with native tools, supports automation via AI Copilot, and offers self-hosting for control.
  - OutSystems: Uses AI to guide app development, automate validation, and scale infrastructure seamlessly.
  - Mendix: Combines embedded machine learning and API-based AI for faster development and better resource management.
  - Appian: Focuses on workflow automation and secure AI deployments with Private AI architecture.
  - Retool: Offers flexible AI integrations and governance for building internal tools with minimal effort.

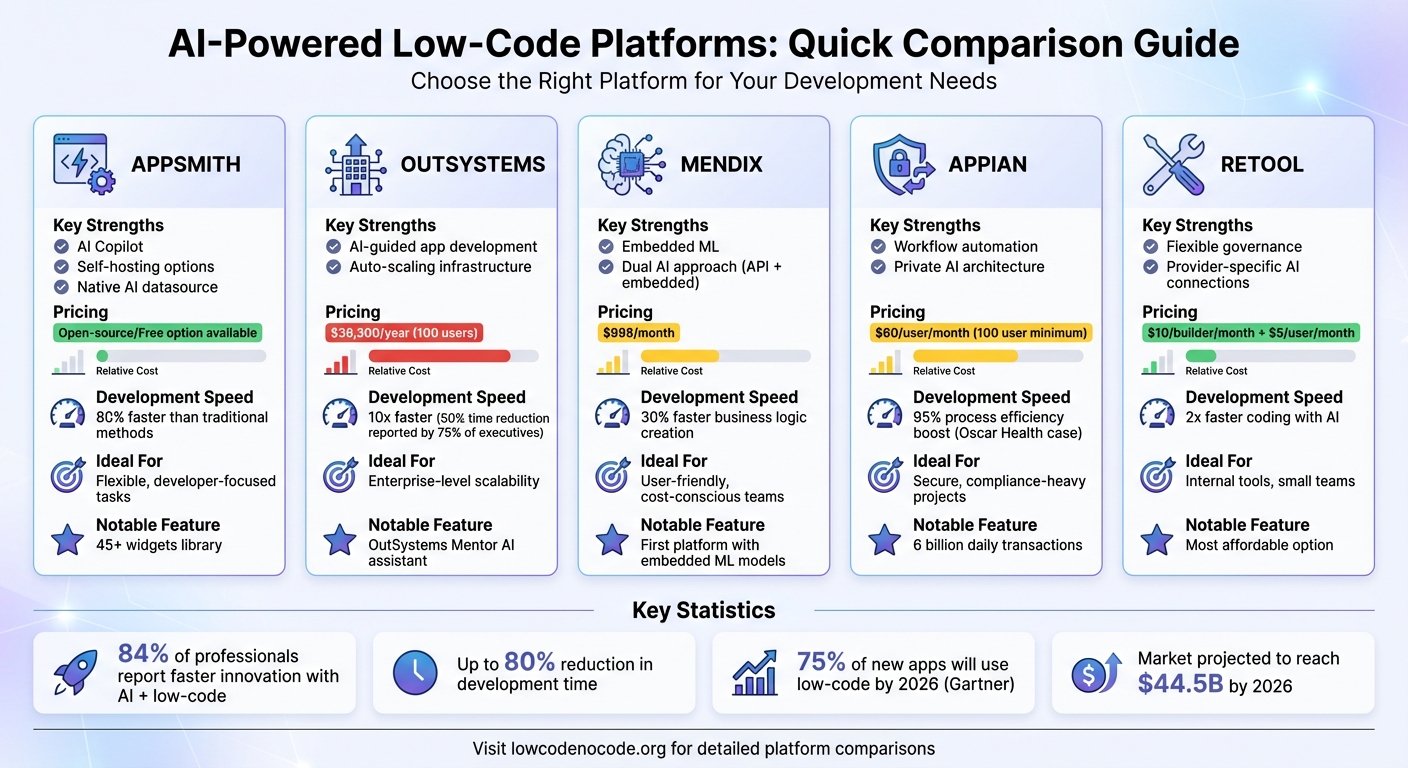

Quick Comparison

| Platform | Key Strengths | Starting Price | Ideal For |
|---|---|---|---|
| Appsmith | AI Copilot, self-hosting options | Free open-source option | Flexible, developer-focused tasks |
| OutSystems | AI-guided app development | $36,300/year (100 users) | Enterprise-level scalability |
| Mendix | Embedded ML, dual AI approach | $998/month | User-friendly, cost-conscious teams |
| Appian | Workflow automation, Private AI | $60/user/month (100-user minimum) | Secure, compliance-heavy projects |
| Retool | Flexible governance, low cost | $10/builder/month + $5/standard user/month | Internal tools, small teams |

AI in low-code platforms is helping businesses shorten development times (vendors such as Appsmith claim up to 80% faster) while addressing challenges like capacity visibility and resource allocation. The right platform depends on your specific needs, budget, and project complexity.

Low-Code AI Platform Comparison: Features, Pricing, and Ideal Use Cases

1. Appsmith

AI Integration Methods

Appsmith makes it easy for developers to integrate AI into their projects with its native AI datasource. This built-in feature eliminates the hassle of managing external API keys or complex authentication processes. With it, users can access tools for text generation, classification, and image analysis directly from the platform. To handle unstructured data, Appsmith utilizes RAG (Retrieval-Augmented Generation), which organizes and indexes data in a vector database, ensuring only the most relevant information is retrieved for each query.
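The retrieval step behind RAG can be sketched in a few lines. This is a minimal illustration, not Appsmith's implementation: it assumes documents have already been converted to embedding vectors (the tiny hand-written vectors below are hypothetical stand-ins for a real embedding model and vector database), ranks them by cosine similarity to the query vector, and returns document IDs alongside scores so an answer can cite its sources.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Return the k (doc_id, score) pairs most similar to query_vec."""
    scored = [(doc_id, cosine(query_vec, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Hypothetical pre-computed embeddings; a real system would get these from an
# embedding model and store them in a vector database.
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "returns-faq": [0.8, 0.2, 0.1],
}

top = retrieve([1.0, 0.0, 0.0], index, k=2)
print([doc_id for doc_id, _ in top])  # the IDs double as citations
```

Only the top-k passages are handed to the model, which keeps prompts small and focused on relevant content.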

For structured data, the platform uses Function Calling, allowing AI agents to interact directly with SQL/NoSQL databases and APIs. This enables real-time actions, such as updating records or initiating workflows based on AI-driven insights. Additionally, Appsmith supports file uploads up to 20 MB, providing context for text generation tasks. The platform is compatible with major language model providers, including OpenAI, Anthropic, and Google AI.
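Function calling generally works by having the model emit a structured request (a tool name plus JSON arguments) that the application validates and then executes itself. The sketch below assumes a hypothetical `update_record` tool and an in-memory table, with the model's reply hard-coded as a JSON string; it illustrates the pattern and does not reflect Appsmith's actual API.

```python
import json

records = {42: {"status": "pending"}}  # hypothetical in-memory table

def update_record(record_id: int, status: str) -> dict:
    """Hypothetical tool: update one record's status and return the record."""
    records[record_id]["status"] = status
    return records[record_id]

# Only tools registered here can be invoked by the model.
TOOLS = {"update_record": update_record}

def dispatch(model_output: str) -> dict:
    """Parse a model's tool call and run the matching registered function."""
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"Unknown tool: {name}")
    return TOOLS[name](**call["arguments"])

# A reply the model might produce when asked to approve record 42.
reply = '{"name": "update_record", "arguments": {"record_id": 42, "status": "approved"}}'
print(dispatch(reply))
```

Keeping an explicit registry means the model can only trigger actions the developer has deliberately exposed.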

"Appsmith is committed to providing safe and responsible access to AI capabilities. Your prompts, outputs, embeddings, and data are not shared with other users and are never utilized to fine-tune models." - Appsmith Documentation

These features make Appsmith a powerful tool for integrating AI into development workflows, saving time and effort.

Automation Capabilities

Appsmith extends its AI integration with tools that simplify coding and workflow automation. Its AI Copilot can generate JavaScript functions, SQL queries, and even custom widgets based on natural language prompts, cutting down on manual coding. For example, GSK used Appsmith to develop an application that automated patching across 3,500 Linux servers in just one development sprint. Similarly, Block (formerly Square) sped up customer support processes, reducing the time needed to process new applications by 50%.

The platform’s Workflows feature enables developers to automate complex processes from start to finish, incorporating human-in-the-loop logic when necessary. A centralized JavaScript IDE ensures that AI queries and business rules are coordinated efficiently, avoiding code fragmentation. According to Appsmith, developers can create custom AI applications 80% faster than with traditional methods. Companies like HeyJobs have reported adding new features 90% faster after updating their legacy applications with Appsmith.

Infrastructure Optimization

Appsmith gives teams full control over their AI deployments with self-hosting options through Docker or Kubernetes. Its integrated Postgres database can also function as a vector store for AI applications, simplifying the deployment of self-hosted knowledge bases. Recent updates have dramatically improved Git sync performance, making it 60 times faster, which helps teams manage versions and deploy updates across development, staging, and production environments more efficiently.

These improvements have tangible benefits. For instance, F22 Labs saved $1,200 per month by creating custom extensions for their project management platform using Appsmith, eliminating the need for extra seat licenses. Developers can also take advantage of a library of over 45 widgets, including tables, charts, and forms, which can be directly linked to AI query results, streamlining interface creation.

Governance and Scalability

Appsmith ensures secure and efficient management of resources with robust governance and scalability features. The platform offers Role-Based Access Controls (RBAC), SAML and OIDC SSO, and SCIM-based user provisioning to manage access to AI models and data at an enterprise level. Comprehensive audit logging tracks AI interactions and usage patterns, helping teams address issues and optimize performance. Appsmith is also SOC 2 Type II certified and supports air-gapped deployments for environments requiring the highest levels of security.
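Role-based access to AI resources comes down to checking a user's roles against the permissions attached to each model or datasource before a query runs, and logging the decision. The role names, resource names, and permission table below are hypothetical; in Appsmith these rules are configured in the platform, with SSO and SCIM supplying users' roles, rather than written by hand.

```python
from dataclasses import dataclass, field

# Hypothetical mapping of roles to the AI resources they may call.
ROLE_PERMISSIONS = {
    "analyst": {"summarizer"},
    "support": {"summarizer", "classifier"},
    "admin": {"summarizer", "classifier", "image-analysis"},
}

@dataclass
class User:
    name: str
    roles: set[str] = field(default_factory=set)

# Every access decision is recorded as (user, resource, allowed).
audit_log: list[tuple[str, str, bool]] = []

def can_use(user: User, resource: str) -> bool:
    """Allow access if any of the user's roles grants the resource; log every check."""
    allowed = any(resource in ROLE_PERMISSIONS.get(role, set()) for role in user.roles)
    audit_log.append((user.name, resource, allowed))
    return allowed

alice = User("alice", {"analyst"})
print(can_use(alice, "summarizer"), can_use(alice, "image-analysis"))
```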

"Define trusted logic with functions and data sources to eliminate AI guesses and hallucinations. Responses include automatic citations pulled directly from your data." - Appsmith

Used by over 10,000 teams worldwide, including GSK, Dropbox, and AWS, Appsmith has proven its reliability. For example, Funding Societies developed an AI-powered assistant on the platform that significantly reduced response times for customer inquiries. As an open-source platform under the Apache 2.0 license, Appsmith avoids vendor lock-in while offering full customization and transparency for security audits.


2. OutSystems

AI Integration Methods

OutSystems employs OutSystems Mentor, an AI-powered digital assistant that guides app development from the initial prototype to final deployment. The platform uses an AI framework that assigns specialized agents to stages such as ideation, design, deployment, and monitoring. Its OutSystems Modeling Language (OML) serves as an AI-readable blueprint, helping ensure that applications behave as intended and meet precise requirements. Developers can integrate AI models from providers like Azure OpenAI or use custom models through the OutSystems Developer Cloud (ODC), which simplifies managing and reusing AI tools across projects. Additionally, the AI Agent Builder, available on Forge, lets teams create tailored agents that connect to enterprise data sources like Azure AI Search or Amazon Kendra without writing custom code.

"OutSystems quickly rose to the top of our list as the leading AI-powered low-code platform... we're confident that with OutSystems we'll be able to deliver powerful, automated solutions." - Howard Miller, CIO, UCLA Anderson School of Management

This integrated approach streamlines development and ensures a smooth, automated workflow.

Automation Capabilities

OutSystems significantly reduces development time by applying AI-driven automation across the entire lifecycle. According to survey data, 75% of software executives reported cutting development time by up to 50%. Inline suggestions in Service Studio help developers build logic flows, reducing cognitive load and speeding up coding. Automated validation runs every 12 hours to flag potential performance issues, security risks, and architectural problems. These tools free developers from repetitive tasks so they can focus on higher-value work.

Practical examples highlight its impact. Roche, for instance, launched "Roche Chat", an app offering real-time employee support and custom AI agents, in record time during 2024. Similarly, AmTrust developed six applications in just 15 months by following AI-guided recommendations.

"These new capabilities in terms of generative AI are already helping a lot of the development teams to create things differently and to be more productive. So everybody wins." - João Copeto, CIO, Benfica

In research on AI-assisted development, 56% of organizations using AI reported fewer bugs and improved performance. A Forrester study also found a 363% ROI for businesses using OutSystems' AI tools.
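ROI figures like this are conventionally computed as net benefit divided by cost. The sketch below uses an invented cost total purely to show what a 363% ROI implies: total benefits of about 4.63 times the investment. The study's own methodology (time horizon, discounting, which costs are counted) may differ.

```python
def roi_percent(total_benefits: float, total_costs: float) -> float:
    """Return on investment as a percentage: (benefits - costs) / costs * 100."""
    return (total_benefits - total_costs) / total_costs * 100

def benefits_for_roi(roi_pct: float, total_costs: float) -> float:
    """Invert the formula: total benefits needed to reach a given ROI."""
    return total_costs * (1 + roi_pct / 100)

costs = 1_000_000  # hypothetical total cost of licenses, training, and staff time
needed = benefits_for_roi(363, costs)
print(round(needed))                      # benefits implied by a 363% ROI
print(round(roi_percent(needed, costs)))  # round-trips back to the ROI
```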

Infrastructure Optimization

OutSystems offers a cloud-native infrastructure with built-in features like auto-scaling and load balancing, removing the need for manual resource management. The platform supports AI Agent High Availability setups, enabling multiple deployments of AI models to handle high-demand periods efficiently. Through the ODC dashboard, teams can track token usage, request volumes, and AI performance, helping them manage costs effectively. Real-time monitoring further aids in identifying inefficiencies and optimizing expenses early on.

"When developers configure the agent, the platform ensures that the data doesn't contain sensitive data that an organization would not want exposed. It also monitors things like token usage so users are aware of the costs." - Rodrigo Coutinho, Co-Founder and AI Product Manager, OutSystems

Governance and Scalability

OutSystems complements its automation and infrastructure capabilities with robust governance and scalability features. The Developer Cloud centralizes AI model management, providing unified access controls and monitoring tools. Administrators can set daily token limits for AI models, ensuring predictable usage and preventing unexpected expenses as projects grow. The platform also integrates regulatory compliance tools for standards like GDPR, HIPAA, and PCI, ensuring secure and lawful resource allocation. The Mentor tool plays a key role in governance, automatically reviewing AI-generated code for vulnerabilities and dependencies while validating outputs against organizational standards.
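A daily token limit can be approximated with a per-model counter that resets when the date changes and rejects requests that would exceed the cap. This is a hypothetical sketch, not the ODC configuration: the model name, the limit, and the choice to reject (rather than queue) over-budget calls are all assumptions. The `today` parameter is injectable so the day rollover can be tested.

```python
from datetime import date
from typing import Optional

class DailyTokenBudget:
    """Track token usage per model and enforce a daily cap (hypothetical policy)."""

    def __init__(self, limits: dict[str, int]):
        self.limits = limits
        self.used: dict[str, int] = {}
        self.day = date.today()

    def _roll_over(self, today: date) -> None:
        # Reset all counters when a new day starts.
        if today != self.day:
            self.used.clear()
            self.day = today

    def try_consume(self, model: str, tokens: int, today: Optional[date] = None) -> bool:
        """Record usage and return True if the request fits in today's budget."""
        self._roll_over(today or date.today())
        spent = self.used.get(model, 0)
        if spent + tokens > self.limits[model]:
            return False  # over budget: the caller should defer or reject the request
        self.used[model] = spent + tokens
        return True

budget = DailyTokenBudget({"summarizer-model": 10_000})
day1 = date(2025, 1, 6)
print(budget.try_consume("summarizer-model", 8_000, today=day1))              # fits
print(budget.try_consume("summarizer-model", 3_000, today=day1))              # would exceed the cap
print(budget.try_consume("summarizer-model", 3_000, today=date(2025, 1, 7)))  # new day, counter reset
```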

With 84% of companies having incorporated AI into their development processes within the last six months to five years, OutSystems is well positioned to support this trend.

"75% of OutSystems customers are still in the beginning stage of generative AI implementation, and we want to help them meet the moment." - Paulo Rosado, Founder and Chairman of the Board, OutSystems

3. Mendix

AI Integration Methods

Mendix incorporates Maia, an AI suite within Studio Pro, to assist developers throughout the software development lifecycle. What makes Mendix stand out is its dual approach: combining API-based AI with embedded machine learning. Maia connects to top-tier models like Claude (via Amazon Bedrock) and Llama 3.1 8B, enabling tasks such as generating domain models, pages, and test data from text descriptions or images. Additionally, the platform’s ML Kit embeds ONNX models directly into the app's JVM, reducing latency and removing the need for external hosting.

The Logic Recommender acts as an AI co-developer, analyzing patterns from over 12 million anonymized applications to suggest next actions for microflows and workflows; Mendix reports 95% accuracy for these suggestions. While many platforms rely solely on cloud-based AI, Mendix describes itself as the first low-code platform to support embedded machine learning models within applications.
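The following does not reflect how the Logic Recommender is built; it only illustrates the general idea of mining past flows for likely next actions. It swaps in a simple frequency model, counting which activity most often follows another across a few hypothetical microflows, in place of whatever trained model Mendix actually uses.

```python
from collections import Counter, defaultdict

def build_transitions(flows: list[list[str]]) -> dict[str, Counter]:
    """Count how often each activity is immediately followed by another."""
    transitions: dict[str, Counter] = defaultdict(Counter)
    for flow in flows:
        for current, nxt in zip(flow, flow[1:]):
            transitions[current][nxt] += 1
    return transitions

def recommend(transitions: dict[str, Counter], current: str, k: int = 2) -> list[str]:
    """Suggest the k activities most often seen after `current`."""
    return [activity for activity, _ in transitions.get(current, Counter()).most_common(k)]

# Hypothetical, anonymized activity sequences from past microflows.
history = [
    ["Retrieve", "Change object", "Commit"],
    ["Retrieve", "Change object", "Commit"],
    ["Retrieve", "Delete"],
    ["Create object", "Commit"],
]

model = build_transitions(history)
print(recommend(model, "Retrieve"))
```

A frequency table like this captures only the immediately preceding step; a production recommender would also weigh the surrounding context of the flow.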

"Mendix is the first and only low-code platform to support the embedded integration of ML models into your applications... the ML Kit enables your AI models to run inside the confines of your environment." – Mendix Evaluation Guide

This integrated AI approach lays the groundwork for faster development, as highlighted in its automation tools.

Automation Capabilities

Mendix offers automation features that significantly speed up development. The Maia Logic Recommender, for instance, provides real-time, context-driven suggestions, helping developers create business logic up to 30% faster by leveraging insights from over 40 activity options. The Start with Maia feature takes automation a step further by generating entire application foundations - like domain models, pages, and test data - from simple text descriptions, PDFs, or images, drastically reducing the time required for initial development.

To manage resources effectively, a Token Consumption Monitor tracks AI usage and associated costs. Additionally, built-in automation features like auto-provisioning and auto-healing ensure minimal downtime during the development process.

"Use Maia Logic Recommender for real-time context-driven recommendations to build business logic up to 30% faster." – Mendix Platform Page

Infrastructure Optimization

Mendix's architecture is designed for efficiency. By embedding machine learning models and using a cloud-native, containerized setup, the platform reduces latency and API expenses. This flexibility allows for seamless deployment across cloud or on-premises environments, making it ideal for applications requiring high performance or heightened privacy.

The Mendix Cloud Gen AI Resource Packs simplify AI resource management by offering direct connectivity to leading models via Amazon Bedrock, removing the need for manual setup or configuration. For developers working on conversational AI, the Agent Builder Starter App supports retrieval-augmented generation (RAG) architectures. This feature enables AI models to access real-time, company-specific knowledge bases, improving the relevance and accuracy of responses. These infrastructure optimizations ensure efficient resource use, minimizing overhead while improving performance.

Governance and Scalability

Mendix ensures transparency and maintainability through a model-driven development approach. Unlike code-generation tools that produce hard-to-trace outputs, Mendix uses model-driven abstractions, making AI-generated components predictable and easy to manage within enterprise systems. The Best Practice Recommender further enhances code quality by identifying and resolving development anti-patterns in real time.

The platform also emphasizes data privacy. Pre-trained large language models (LLMs) are used alongside customizable content filters to flag biases or inappropriate content. The Token Consumption Monitor helps control costs, ensuring that AI usage remains efficient and affordable.

"What Mendix gave us was a fast track for development of the smaller demands, or the things that can be done in an agile way with rapid prototyping." – Mark Vermeer, CIO, City of Rotterdam

As the low-code application platform market is projected to grow by around 30% in the coming years, Mendix’s governance framework positions organizations to scale AI solutions responsibly. By balancing cost control, data privacy, and quality assurance, Mendix provides a robust foundation for secure and scalable development practices.

4. Appian

AI Integration Methods

Appian employs a layered approach to incorporating AI, using three distinct tiers. The first tier involves rules-based automation, ideal for handling high-volume, predictable tasks. The second tier, AI Skills, focuses on specific cognitive functions like document summarization and identifying personally identifiable information (PII). Appian Cloud offers 11 embedded skills to support these tasks. The third tier, AI Agents, tackles more complex, multi-step reasoning processes.
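One way to picture the three tiers is as a router that sends each task to the least expensive tier able to handle it. The classification rules below (known form types go to rules, single cognitive steps go to an AI Skill, anything multi-step goes to an agent) and the task kinds are assumptions for illustration, not Appian's routing logic.

```python
from dataclasses import dataclass

@dataclass
class Task:
    kind: str       # e.g. "invoice", "summarize", "investigate"
    steps: int = 1  # estimated number of reasoning steps

# Hypothetical task kinds each tier is configured to handle.
RULE_KINDS = {"invoice", "address_change"}
SKILL_KINDS = {"summarize", "detect_pii", "classify"}

def route(task: Task) -> str:
    """Pick the least expensive tier that can handle the task."""
    if task.kind in RULE_KINDS:
        return "rules"     # predictable, high-volume work
    if task.kind in SKILL_KINDS and task.steps == 1:
        return "ai_skill"  # a single cognitive function
    return "ai_agent"      # open-ended, multi-step reasoning

for t in [Task("invoice"), Task("summarize"), Task("investigate", steps=4)]:
    print(t.kind, "->", route(t))
```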

What sets Appian apart is its Private AI architecture, which ensures that enterprise data stays within Appian Cloud, avoiding exposure to external large language model providers. Additionally, Appian's Data Fabric acts as a virtual layer, allowing AI to interact with various data sources without requiring extensive data migration or system overhauls.

"Process HQ integrates Appian Data Fabric to reduce manual data prep and is unified with Appian process automation so getting from insight to action has never been easier." – Michael Beckley, CTO & Founder, Appian

These tools - Private AI and Data Fabric - serve as the backbone for Appian's automation and scalability, streamlining operations and enhancing efficiency.

Automation Capabilities

Appian's AI Copilot significantly lightens the workload for developers by automating key tasks. It can transform business requirements into complete application plans, convert PDF forms into interface designs in seconds, and generate realistic sample data paired with detailed unit tests. These unit tests even account for edge cases, such as null values. Its process modeler uses anonymized historical data to predict the most likely next steps, reducing time spent navigating development options.

A real-world example of Appian's automation in action is Oscar Health's implementation of Process HQ in April 2024. This system automated data analysis for daily service team improvements, focusing on identifying problem areas, conducting root cause analysis, and tracking countermeasures. The result? A reported 95% boost in process efficiency.

For more autonomous operations, AI Agents handle intricate tasks like case routing and record updates without human input. These agents are capped at 30 tool calls per run and have a maximum execution time of 3 minutes to ensure system performance remains stable.
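Caps like these are typically enforced by the loop that drives the agent: before each tool call, it checks the remaining call count and the elapsed time. The sketch below mirrors the two stated limits (30 tool calls, 3 minutes) but is otherwise hypothetical; `plan_next_step` stands in for the model deciding what to do next, and the clock is injectable for testing.

```python
import time
from typing import Callable, Optional

MAX_TOOL_CALLS = 30
MAX_SECONDS = 180  # 3 minutes

def run_agent(plan_next_step: Callable[[list], Optional[Callable[[], object]]],
              clock: Callable[[], float] = time.monotonic) -> tuple[str, list]:
    """Run tool calls until the plan finishes or a budget is exhausted."""
    start = clock()
    results: list = []
    while True:
        if len(results) >= MAX_TOOL_CALLS:
            return "stopped: tool-call limit", results
        if clock() - start >= MAX_SECONDS:
            return "stopped: time limit", results
        tool = plan_next_step(results)  # None means the agent considers the task done
        if tool is None:
            return "done", results
        results.append(tool())

# Hypothetical plan: update three records, then finish.
def three_updates(results: list) -> Optional[Callable[[], str]]:
    return (lambda: f"updated record {len(results) + 1}") if len(results) < 3 else None

print(run_agent(three_updates))
```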

These automation tools seamlessly integrate into Appian's broader infrastructure management capabilities.

Infrastructure Optimization

Appian's Autoscale feature dynamically adjusts processing power to demand, scaling capacity up to 100 times for high-volume automation without manual adjustments. The platform processes over 6 billion transactions daily across 63 availability zones, with a maximum process initiation rate of 700 per minute. To maintain efficiency, Appian enforces limits on process variables (5 MB), automation node timeouts (90 seconds), and system queue capacity (one million entries).
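A rate limit such as 700 process initiations per minute is commonly modeled as a token bucket, and callers that start processes in bulk can mirror it client-side to avoid rejected requests. This sketch assumes a token bucket is a reasonable model; how Appian enforces the limit internally isn't described here. The clock is injectable so behavior can be tested deterministically.

```python
import time
from typing import Callable

class TokenBucket:
    """Allow bursts up to `capacity` events, refilled at `rate_per_sec`."""

    def __init__(self, capacity: float, rate_per_sec: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.rate = rate_per_sec
        self.clock = clock
        self.tokens = capacity
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

# 700 process starts per minute is roughly 11.67 per second.
now = [0.0]
bucket = TokenBucket(capacity=700, rate_per_sec=700 / 60, clock=lambda: now[0])
started = sum(bucket.allow() for _ in range(1000))
print(started)  # only the first 700 fit in the initial burst
```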

The platform's optimization capabilities have delivered tangible results. For instance, PwC reported a 30% improvement in claims handling efficiency, while the Texas Department of Public Safety streamlined its contract award process from start to finish.

Governance and Scalability

Appian ensures that its AI features align with existing enterprise security measures. AI outputs automatically follow record-level and row-level permissions, while system-level audit logs track all AI activities. Optional logging of AI inputs and outputs is also available for compliance purposes. Developers benefit from a complete version history for AI agents, making it easy to review changes or revert to earlier configurations.
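The principle that AI outputs respect record- and row-level permissions can be illustrated by filtering rows against the requesting user's entitlements before anything is placed in a prompt, so the model never sees data the user couldn't open directly. The region-based rule and the records below are hypothetical.

```python
from typing import Iterable

# Hypothetical customer records; each row is tagged with the region that owns it.
ROWS = [
    {"id": 1, "region": "EMEA", "note": "Renewal due"},
    {"id": 2, "region": "APAC", "note": "Escalated ticket"},
    {"id": 3, "region": "EMEA", "note": "New contact"},
]

def visible_rows(rows: Iterable[dict], user_regions: set[str]) -> list[dict]:
    """Keep only the rows the user is entitled to see (row-level security)."""
    return [row for row in rows if row["region"] in user_regions]

def build_prompt(question: str, rows: list[dict]) -> str:
    """Assemble model context exclusively from permitted rows."""
    context = "\n".join(f"- #{row['id']}: {row['note']}" for row in rows)
    return f"{question}\n\nRecords:\n{context}"

prompt = build_prompt("Summarize open items.", visible_rows(ROWS, {"EMEA"}))
print(prompt)
```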

The platform's scalability is exemplified by Acclaim Autism, which revamped its patient intake process. This transformation reduced wait times from months to days, increased monthly onboarding capacity by 15 times, and cut staff attrition by over 50%. For cost management, Appian tracks resource usage through "AI actions", which are calculated based on prompt length, tool descriptions, and response generation. Administrators can use the Monitor tab to analyze usage patterns and identify areas for improvement.


5. Retool

Retool stands out among low-code platforms by combining AI-driven resource management with integration and governance features designed to streamline development.

AI Integration Methods

Retool takes a focused approach to AI integration, offering provider-specific connections. As of February 23, 2026, users can configure separate connections for OpenAI, Anthropic, and AWS Bedrock, with support for multiple API keys per provider. This setup allows organizations to allocate access based on department, environment, or use case, ensuring that one high-demand application doesn’t drain the entire AI budget.
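The value of several keys per provider is easiest to see as a lookup from (provider, team) to a key with its own budget, so one team exhausting its allowance leaves the others untouched. The team names, key references, and budgets below are hypothetical, and in practice the keys themselves would live in a secrets store rather than in code.

```python
from dataclasses import dataclass

@dataclass
class ProviderKey:
    key_ref: str           # reference to a stored secret, never the raw key
    monthly_budget: float  # USD
    spent: float = 0.0

# Hypothetical allocation: separate keys per provider and team.
KEYS = {
    ("openai", "support"): ProviderKey("vault:openai/support", 500.0),
    ("openai", "finance"): ProviderKey("vault:openai/finance", 200.0),
    ("anthropic", "support"): ProviderKey("vault:anthropic/support", 300.0),
}

def charge(provider: str, team: str, cost: float) -> str:
    """Charge a request to the team's own key; refuse if its budget would be exceeded."""
    key = KEYS[(provider, team)]
    if key.spent + cost > key.monthly_budget:
        raise RuntimeError(f"{team} has exhausted its {provider} budget")
    key.spent += cost
    return key.key_ref

charge("openai", "support", 450.0)
print(charge("openai", "finance", 50.0))  # finance is unaffected by support's heavy usage
```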

The platform uses ToolScript, a JSX-inspired markup language that transforms applications into a format easily understood by AI models. This approach ensures that only relevant components, queries, and logic are shared with the models, avoiding unnecessary data overload. Retool also employs agentic tools to retrieve key details, such as database schemas and component patterns, while leaving out sensitive or irrelevant data.
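Sharing structure rather than data can be approximated by deriving column names and value types from a result set and sending only that summary to the model. The helper below is a hypothetical illustration of the idea; it is not Retool's ToolScript format or its schema-retrieval tooling.

```python
def schema_summary(table: str, rows: list[dict]) -> str:
    """Describe a table's columns and value types without exposing any values."""
    columns: dict[str, set[str]] = {}
    for row in rows:
        for name, value in row.items():
            columns.setdefault(name, set()).add(type(value).__name__)
    lines = [f"{name}: {' | '.join(sorted(types))}" for name, types in columns.items()]
    return f"table {table}\n" + "\n".join(lines)

rows = [
    {"id": 7, "email": "jane@example.com", "balance": 120.5},
    {"id": 8, "email": "raj@example.com", "balance": None},
]
summary = schema_summary("customers", rows)
print(summary)
```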

"Our AI builds with users, not for them. Why? Because our users aren't building casual prototypes, they're creating mission-critical apps." – Gabriella Angiolillo and Allen Kleiner, Product & Engineering, Retool

For organizations prioritizing data security, Retool supports self-hosted models through OpenAI-compatible APIs, enabling sensitive data to remain internal.

These integration strategies lay the groundwork for smooth resource management, which ties directly into Retool’s automation features.

Automation Capabilities

Retool leverages its AI integrations to automate tasks like application creation and code refinement. Features like Assist and Ask AI allow users to generate full applications, write queries, and fix code (SQL, JavaScript, and GraphQL) automatically. The platform can even build entire automation workflows based on a single prompt.

To manage usage, Retool enforces token limits: 100,000,000 tokens per hour for Assist, 20,000,000 for Retool Agents, and 250,000 for other AI functionalities like resource queries when using Retool-managed keys. Enterprise users can bypass these limits by using their own API keys but must account for approximately 80,000 input tokens per minute per concurrent user.
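The 80,000-tokens-per-minute figure turns capacity planning for bring-your-own-key deployments into simple multiplication. The sketch below estimates the provider rate limit to request for a given number of concurrent users; the 20% default headroom is an assumption, not a Retool recommendation.

```python
import math

TOKENS_PER_MIN_PER_USER = 80_000  # approximate input tokens per concurrent user

def required_tpm(concurrent_users: int, headroom_pct: int = 20) -> int:
    """Tokens-per-minute quota to request from the provider, with safety headroom."""
    return math.ceil(concurrent_users * TOKENS_PER_MIN_PER_USER * (100 + headroom_pct) / 100)

def max_concurrent_users(provider_tpm: int) -> int:
    """How many concurrent users a given provider quota supports, without headroom."""
    return provider_tpm // TOKENS_PER_MIN_PER_USER

print(required_tpm(25))                 # 25 users at 80,000 TPM each, plus 20%
print(max_concurrent_users(2_000_000))  # users supported by a 2M TPM quota
```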

"The LLM never sees sensitive data. It works with schemas, column names, and data types, not the records themselves." – Gabriella Angiolillo and Allen Kleiner, Product & Engineering, Retool

Governance and Scalability

Retool ensures transparency and control with AI-generated changes automatically tagged in the application’s version history. This creates a clear audit trail, distinguishing between human and AI contributions. Additionally, AI-generated edits adhere to Retool’s existing security protocols, and administrators can regulate who can create, modify, or trigger specific AI resources and queries.

The platform’s provider-specific resource model enhances scalability by allowing organizations to assign distinct API keys to different teams. This prevents conflicts, improves auditability, and requires no changes to pricing or packaging, making it an easy upgrade for current users.

Pros and Cons

This section outlines the strengths and limitations of various low-code platforms specializing in AI-powered resource allocation, helping you weigh the options based on your organization's needs.

OutSystems stands out for its scalability, leveraging an auto-scaling infrastructure and AI-driven dependency analysis to streamline updates across app portfolios. Its AI-readable blueprint (OML) ensures consistent behavior, and the platform claims it enables organizations to build mission-critical apps 10x faster than traditional methods. Pricing starts at approximately $36,300 per year for 100 internal users. However, its focus on scalability may not suit organizations with simpler needs.

Appian shines in workflow automation, using natural language processing to quickly generate workflows. Its Data Fabric approach integrates data from various sources without creating silos, and built-in "natural guardrails" enhance security by preventing AI from deploying code with vulnerabilities. On the downside, its licensing can be complex, with a minimum requirement of 100 users at around $60 per user per month for the Standard plan.

Mendix emphasizes user experience, offering AI-powered debugging assistants and chatbots to guide users through workflow automation. It provides a free tier and basic plans starting at $998 per month, making it a more affordable option than OutSystems. However, as with other AI-driven platforms, Mendix's AI features can produce "hallucinations", so human oversight is needed to ensure accuracy.

Retool offers considerable flexibility in governance, allowing organizations to assign unique API keys to different teams without changes to pricing. Starting at $10 per builder per month plus $5 per standard user per month on the Team plan, it is a cost-effective choice for building data-centric internal tools. That said, Retool is less suitable for developing complex, full-stack enterprise applications.
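The starting prices quoted above meter different things (a flat annual fee, per-user seats with a 100-user minimum, a flat monthly plan, and per-builder plus per-user seats), so a rough annualization helps compare them. The team shape (10 builders, 90 end users) is hypothetical, Mendix's $998/month is treated as a flat fee, and real quotes will differ.

```python
def annual_costs(builders: int, end_users: int) -> dict[str, int]:
    """Rough yearly list-price cost per platform (assumptions noted inline)."""
    total_users = builders + end_users
    return {
        # Flat starting price quoted for 100 internal users.
        "OutSystems": 36_300,
        # $60 per user per month, with a 100-user minimum.
        "Appian": 60 * max(total_users, 100) * 12,
        # Basic plan treated as a flat $998 per month.
        "Mendix": 998 * 12,
        # Team plan: $10 per builder plus $5 per standard user, per month.
        "Retool": (10 * builders + 5 * end_users) * 12,
    }

costs = annual_costs(builders=10, end_users=90)
for platform, cost in sorted(costs.items(), key=lambda item: item[1]):
    print(f"{platform:<10} ${cost:>7,}")
```

Even under these simplified assumptions, the spread between the cheapest and most expensive option is well over $10,000 a year.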

Across the board, these platforms share some common challenges, including limited customization compared to traditional coding, potential security risks if AI-generated code isn’t rigorously checked, and data privacy concerns when handling large datasets.

"Low-code AI... promises to speed application development like never before" - Dan O'Keefe, Appian

Each platform has its own approach to optimizing resource allocation. By understanding their trade-offs, you can better determine which solution aligns with your organizational goals.

Conclusion

AI-powered resource allocation is changing the game for low-code development, shifting it from rigid planning to flexible, real-time optimization. The numbers speak for themselves: 84% of professionals report faster innovation when combining AI with low-code tools, developers complete coding tasks up to twice as fast with generative AI, and by 2026, 75% of new application development will involve low-code platforms, according to Gartner. These trends highlight the importance of selecting a platform that not only speeds up development but also aligns with your specific operational needs.

The key is to choose a platform that addresses your organization's toughest challenges. For instance, OutSystems or Mendix suit modernizing outdated systems, Appian fits compliance-heavy work, Retool is well suited to quickly building internal tools, and Appsmith offers flexibility and control through open source and self-hosting.

The potential time savings are significant: vendors report that low-code AI platforms can reduce development time by up to 80%. However, real success depends on identifying and addressing high-friction processes within your business. A practical approach is to run a 2–4 week pilot to test how well a platform performs in your environment. Pricing differences between platforms can exceed $10,000 annually at scale, so model costs for your expected numbers of builders and users before committing, and verify that AI-generated allocations align with your goals. While AI can help streamline implementation, defining specific objectives remains a human responsibility.

To make an informed decision, check out the Best Low Code & No Code Platforms Directory at https://lowcodenocode.org. This resource offers detailed comparisons to help you evaluate platforms based on your resource allocation needs, integration capabilities, and budget. With the global low-code market projected to hit $44.5 billion by 2026, adopting the right platform now positions your organization to harness AI-driven automation before it becomes the industry norm.

FAQs

What does “AI resource allocation” mean in low-code?

In low-code platforms, AI resource allocation leverages artificial intelligence to streamline the management of resources such as personnel, time, and tools. By analyzing data, AI can predict future needs, automate task assignments, and adapt plans on the fly. This approach minimizes manual work, avoids bottlenecks, and ensures resources are utilized effectively, leading to quicker development cycles and improved project results in low-code settings.
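A toy version of this loop: forecast next period's demand from recent history, then assign tasks to whoever has the most spare capacity. The moving-average forecast and greedy assignment below are deliberately simple stand-ins for the predictive models described above, and all names and hours are hypothetical.

```python
import heapq

def forecast(history: list[float], window: int = 3) -> float:
    """Predict next period's demand as the mean of the last `window` periods."""
    recent = history[-window:]
    return sum(recent) / len(recent)

def assign(tasks: dict[str, float], capacity: dict[str, float]) -> dict[str, str]:
    """Greedily give each task (largest first) to the person with the most hours left."""
    # heapq is a min-heap, so store negative hours to pop the largest remaining capacity.
    heap = [(-hours, person) for person, hours in capacity.items()]
    heapq.heapify(heap)
    plan = {}
    for task, hours in sorted(tasks.items(), key=lambda t: t[1], reverse=True):
        neg_remaining, person = heapq.heappop(heap)
        remaining = -neg_remaining
        if hours > remaining:
            raise ValueError(f"No one has {hours}h free for {task}")
        plan[task] = person
        heapq.heappush(heap, (-(remaining - hours), person))
    return plan

print(forecast([30, 42, 36, 45]))  # hours of demand expected next sprint
print(assign({"api": 16, "ui": 10, "tests": 6}, {"dana": 20, "lee": 18}))
```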

How do these platforms control AI token costs?

Platforms control AI token costs through metering, limits, and visibility. OutSystems lets administrators set daily token limits per AI model and shows token usage in the ODC dashboard; Mendix provides a Token Consumption Monitor; Appian meters usage as "AI actions" based on prompt length, tool descriptions, and response generation; and Retool enforces hourly token limits on Retool-managed keys and supports separate API keys per team so one application can't drain the entire AI budget.

What keeps AI features secure and compliant?

Security and compliance come from combining platform controls with strong governance. The platforms covered here offer role-based access controls, SSO, and audit logging (Appsmith); AI outputs that follow record- and row-level permissions and a Private AI architecture that keeps data away from external LLM providers (Appian); compliance tooling for standards such as GDPR, HIPAA, and PCI plus automated review of AI-generated code (OutSystems); and version history that separates human from AI changes (Retool). Regular risk assessments, continuous monitoring, and human review of AI output address the remaining risks specific to low-code development and AI agents.